Modern digital experiences are defined by one thing: speed.

When a website loads instantly, a video streams without buffering, or an app responds in milliseconds, users barely notice the infrastructure behind it, until it fails. Behind the scenes, billions of connected devices are generating massive amounts of data every second. Systems such as an NTP server, which keeps network devices synchronized with accurate time, also operate quietly in the background to support reliable digital operations, often alongside high-density hardware such as a blade server in modern data center environments.

Traditional centralized cloud systems are designed for scale, but not always for real‑time performance. That’s where network edge servers play a transformative role.

Gartner predicted that by 2025, about 75% of enterprise-generated data would be created and processed outside a traditional centralized data center or cloud, up from roughly 10% in 2018, reflecting a dramatic shift toward distributed computing to meet performance demands.

Edge servers bring compute power closer to users and devices, minimizing delays and improving responsiveness for a wide range of applications, from streaming and gaming to autonomous vehicles and industrial IoT.

This guide explores what edge servers are, how they work, why they matter, and how organizations use them today.

What Is an Edge Server?

An edge server is a computing server placed closer to end users or data sources, rather than relying solely on centralized cloud data centers located far away. By processing data locally, edge servers reduce latency, speed up response times, and minimize network congestion.

Edge servers are foundational components of edge compute platforms, a distributed architecture that supports real‑time applications and workloads that can’t tolerate delays. In contrast with traditional servers hosted in remote data centers, edge servers place compute resources where they are most effective: near users or devices.

These servers show up in networks that demand speed and performance, such as:

- Content delivery networks (CDNs)

- Streaming services

- Online gaming platforms

- IoT deployments

- Smart city infrastructure

- Real‑time analytics systems

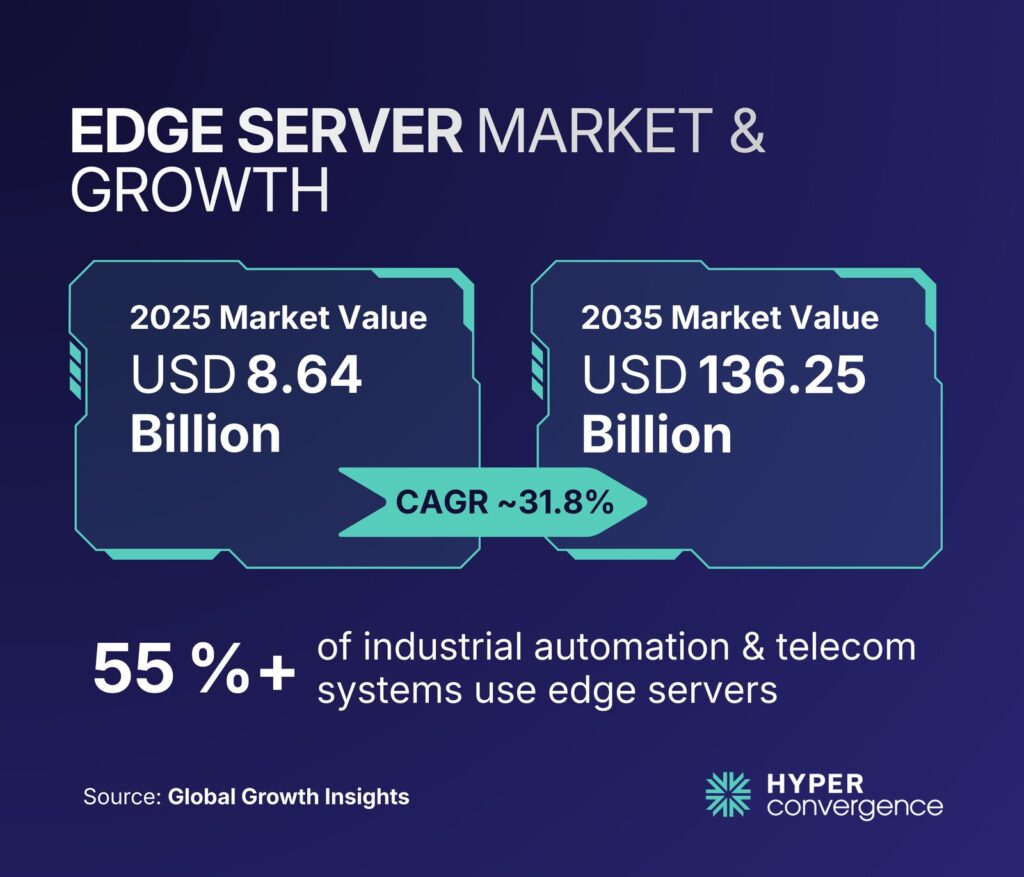

The edge server market is expanding rapidly. In 2025, it was valued at approximately USD 8.64 billion and is projected to grow to USD 11.38 billion in 2026, with expectations to exceed USD 136 billion by 2035 at a compound annual growth rate (CAGR) of more than 31%.

This rapid growth reflects the increasing global demand for real‑time computing, real‑time monitoring, low‑latency connectivity, and distributed processing closer to users.

Why Edge Servers Exist

Edge servers exist to solve a simple problem: distance adds delay.

When a user in one region requests content from a server that sits far away, the request has to travel through many networks. Even small delays can add up fast, especially for workloads that need tight timing.

Edge servers reduce the travel distance and the number of network hops. They also help reduce congestion on central systems.
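To put rough numbers on this, the sketch below estimates round-trip propagation delay over optical fiber. It assumes a signal speed of about 200,000 km/s in fiber (roughly two-thirds of the speed of light in a vacuum) and ignores routing, queuing, and server processing time, so real latency will be higher. The function name and the example distances are illustrative, not measurements.

```python
# Approximate signal speed in optical fiber, in km per second
# (assumption: about two-thirds of the speed of light in a vacuum).
FIBER_SPEED_KM_PER_S = 200_000


def round_trip_ms(distance_km: float) -> float:
    """Return the round-trip propagation delay in milliseconds.

    Covers only the time the signal spends in the fiber; routing,
    queuing, and server processing add further delay on top of this.
    """
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000


# Hypothetical distances: a nearby edge site vs. a distant cloud region.
for label, km in [("edge site", 50), ("distant region", 5000)]:
    print(f"{label}: {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, a site 50 km away costs about 0.5 ms of round-trip propagation, while a region 5,000 km away costs about 50 ms before any processing happens.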

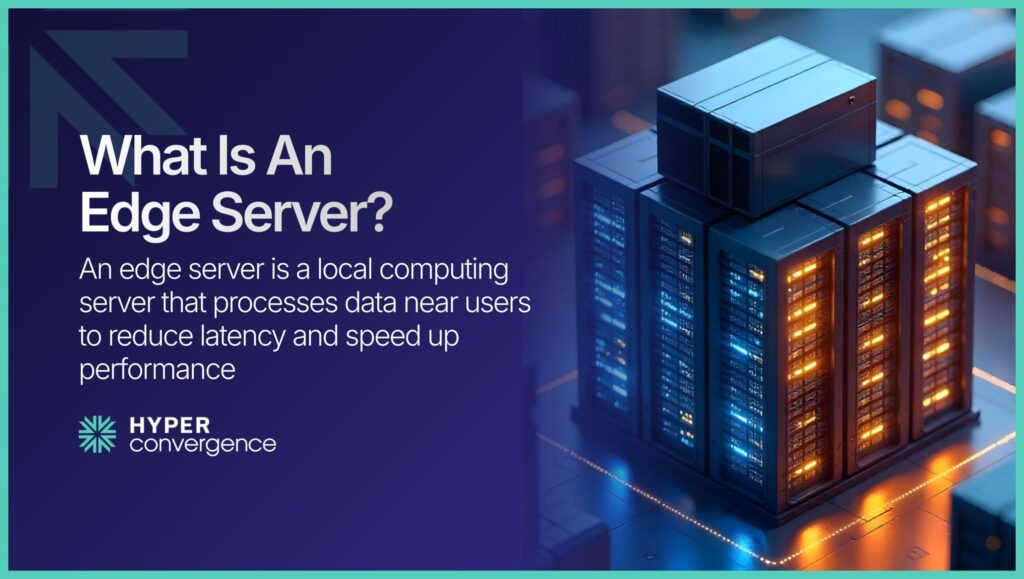

Key Characteristics

Key characteristics of an edge server include:

- Proximity to data sources: Edge servers reside in regional edge facilities, telecom base stations, or on-premises locations such as factories or retail stores. Their placement reduces the distance that data must travel, delivering quicker responses for time‑sensitive applications.

- Local compute and caching: They can process data locally and cache frequently requested content. For example, a streaming platform can cache popular videos on edge servers to deliver them quickly to nearby viewers.

- Reduced workload on origin servers: By handling requests at the edge, they decrease the load on centralized origin servers and help balance network traffic.

- Improved security and sovereignty: Processing sensitive data locally limits exposure to outside networks and supports data security and compliance with regional data regulations.

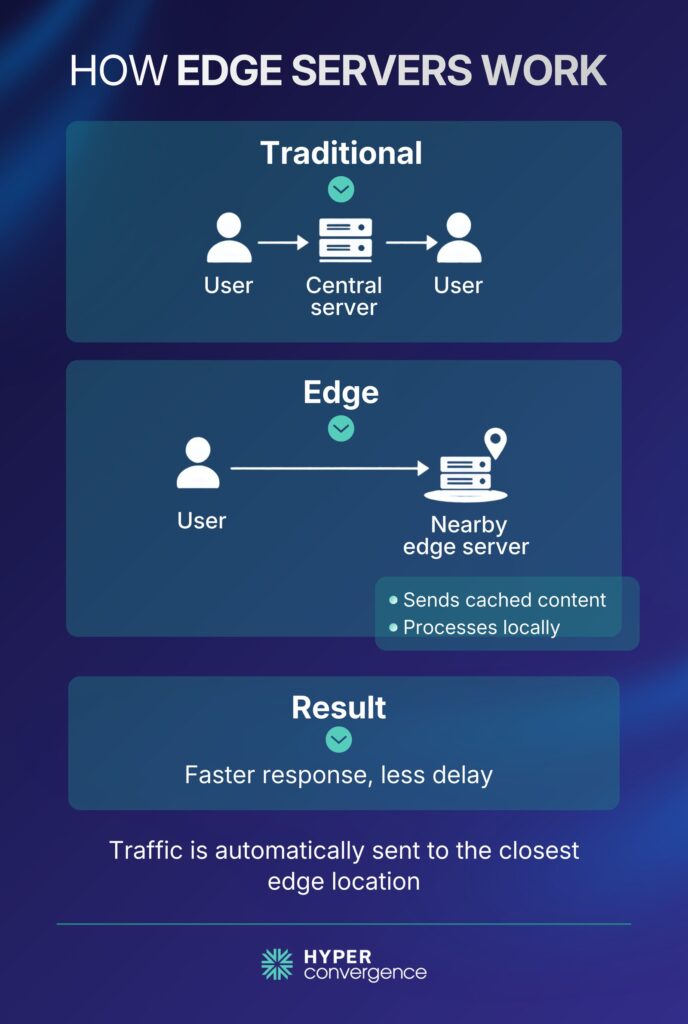

How Edge Servers Work: Centralized vs Edge Models

Edge servers sit between users and central infrastructure, but the exact path depends on the architecture.

Traditional Centralized Model

In a classic setup:

- A user sends a request

- The request travels to a centralized data center or cloud region

- The origin server processes the request

- The response travels back to the user

This model works well for many workloads, but it can be slow for time-sensitive tasks.

Edge Model

In an edge model:

- The request routes to a nearby edge location

- The edge server responds in one of two ways:

  - It serves cached content

  - It runs local compute and returns a response

- Only the required data goes to the origin or central cloud

This improves time-to-first-byte and overall responsiveness for many user-facing apps.
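The cached-content path can be sketched as a small time-to-live (TTL) cache that only contacts the origin on a miss. This is a minimal illustration, not how any particular edge platform implements caching; `EdgeCache`, `fake_origin`, and the 30-second TTL are hypothetical names and values chosen for the example.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Tiny in-memory cache with one time-to-live (TTL) for all entries."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes],
                 ttl_seconds: float = 60.0):
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        # Maps request path -> (expiry timestamp, response body).
        self._store: Dict[str, Tuple[float, bytes]] = {}
        self.origin_requests = 0  # how often the origin was contacted

    def get(self, path: str) -> bytes:
        now = time.monotonic()
        entry = self._store.get(path)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: answered at the edge
        # Cache miss or expired entry: fetch from the origin and store it.
        self.origin_requests += 1
        body = self._fetch(path)
        self._store[path] = (now + self._ttl, body)
        return body


def fake_origin(path: str) -> bytes:
    """Stand-in for a real origin server (hypothetical)."""
    return f"content for {path}".encode()


cache = EdgeCache(fake_origin, ttl_seconds=30)
cache.get("/video/intro.mp4")  # miss: goes to the origin
cache.get("/video/intro.mp4")  # hit: served from the edge
print(cache.origin_requests)   # 1
```

Only the first request reaches the origin; the repeat is answered locally until the entry expires.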

How Traffic Reaches The Nearest Edge Server

Most edge networks use routing and request steering methods that try to send users to the nearest point of presence. At a high level, this usually depends on:

- DNS-based routing that points users to an edge location

- Anycast routing that directs traffic to a nearby network location

- Health checks that avoid unhealthy nodes

- Load balancing rules that spread traffic across available capacity

You do not need to memorize these terms to use edge servers. The key idea is simple: the network tries to handle requests close to users.
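As a rough illustration of that idea, the sketch below picks the closest healthy node for a user. Production networks rely on DNS, anycast, and measured network conditions rather than straight-line distance, so geographic distance here is only a stand-in. The node names, approximate coordinates, and the `pick_node` helper are assumptions made for the example.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EdgeNode:
    name: str
    lat: float
    lon: float
    healthy: bool = True  # result of the most recent health check


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometers."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def pick_node(user_lat: float, user_lon: float,
              nodes: List[EdgeNode]) -> Optional[EdgeNode]:
    """Return the nearest healthy node, or None if none is available."""
    healthy = [n for n in nodes if n.healthy]
    if not healthy:
        return None
    return min(healthy,
               key=lambda n: haversine_km(user_lat, user_lon, n.lat, n.lon))


# Hypothetical points of presence with approximate city coordinates.
NODES = [
    EdgeNode("london", 51.51, -0.13),
    EdgeNode("frankfurt", 50.11, 8.68),
    EdgeNode("new-york", 40.71, -74.01),
]

# A user near Paris is steered to the closest healthy node.
print(pick_node(48.86, 2.35, NODES).name)
```

If the closest node fails its health check, the same function falls back to the next nearest healthy node, which mirrors the health-check behavior in the list above.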

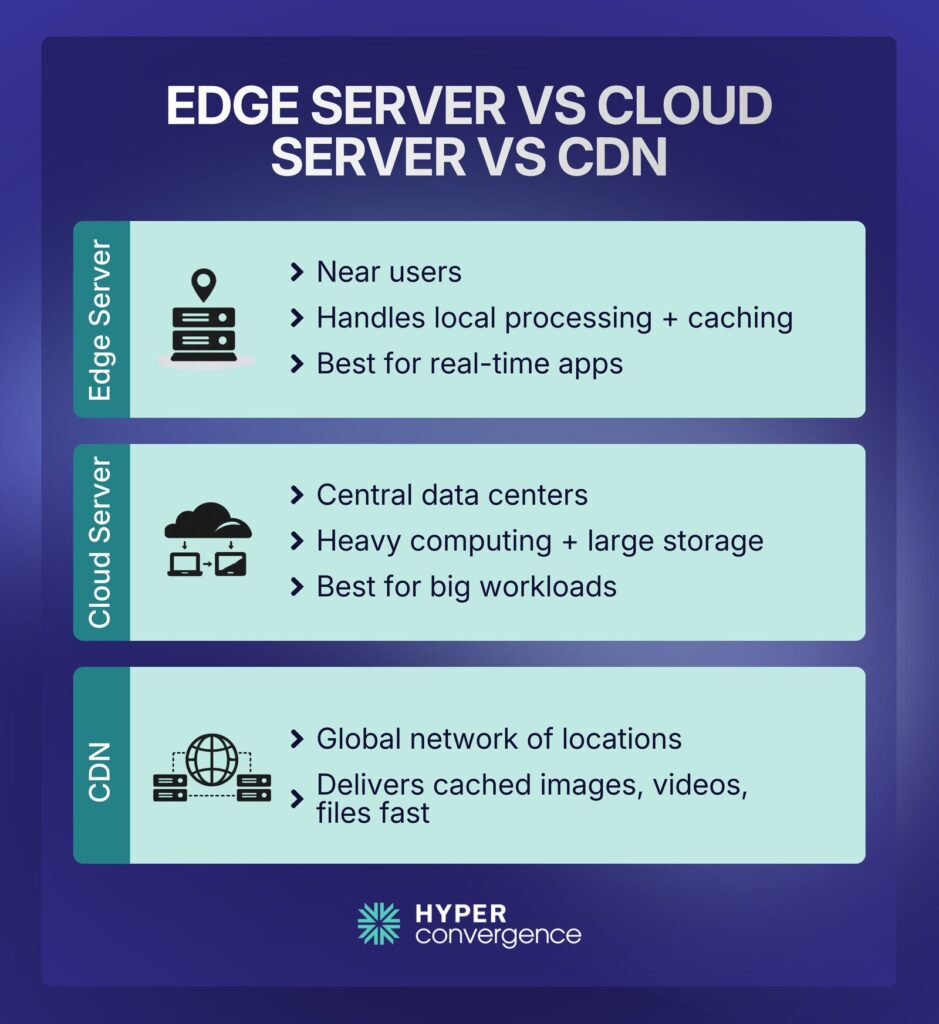

Edge Server vs Cloud Server vs CDN: Conceptual Differences

These terms are often used interchangeably, but they represent distinct concepts. The following table summarizes the main differences:

| Feature | Edge Server | Cloud Server | CDN |
| --- | --- | --- | --- |
| Primary Goal | Provide local compute resources and storage to minimize latency | Deliver scalable compute and storage from centralized data centers | Distribute cached static content globally for faster delivery |
| Location | Near users/devices in regional, on‑premises, or device‑edge sites | Centralized data centers anywhere in the world | Distributed network of PoPs across regions |
| Typical Tasks | Local processing, caching, analytics, and security | Heavy computation, large‑scale storage, centralized analytics | Serving static assets (e.g., images, videos) from cache |
| Best Use Cases | Real‑time applications (gaming, AR/VR), IoT analytics, autonomous vehicles, industrial automation | Large enterprise workloads, batch processing, global SaaS platforms | Web and media content delivery, software downloads |
| Reliant On | Distributed edge nodes and specialized hardware | Central cloud infrastructure and virtualization | Edge servers within PoPs linked to origin servers |

What Matters In Practice

- A CDN is usually edge infrastructure optimized for caching and delivery at scale

- An edge server can do CDN-like caching, plus compute and security functions

- A cloud server is still the best place for large-scale storage and heavy computing

Many real systems use all three.

Hybrid Edge and Cloud Strategies

Edge servers do not replace cloud servers. Most teams use both.

A practical pattern looks like this:

- Edge handles fast, localized work

- Cloud handles centralized analytics, large storage, and heavy processing

Common hybrid examples:

- A retailer runs point-of-sale and in-store analytics at an edge location, then sends summaries to a cloud data warehouse

- A manufacturer runs machine monitoring locally, then trains forecasting models in the cloud

- A bank uses edge services for web request handling and security checks, while core processing stays in protected central environments

Hybrid setups like these help balance responsiveness and scale.
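The "process locally, send summaries" pattern from the retailer example can be sketched as a small aggregation step that runs at the edge. The `summarize` function and the sample values are hypothetical; a real deployment would choose the summary fields its cloud analytics actually need.

```python
from statistics import mean
from typing import Dict, List


def summarize(transactions: List[float]) -> Dict[str, float]:
    """Reduce raw transaction amounts to a small summary record.

    Only this summary would be uploaded to the cloud; the raw values
    stay at the edge site.
    """
    if not transactions:
        return {"count": 0, "total": 0.0, "average": 0.0}
    return {
        "count": len(transactions),
        "total": sum(transactions),
        "average": mean(transactions),
    }


# Hypothetical in-store transaction amounts collected at the edge.
print(summarize([10.0, 20.0, 30.0]))
```

However many transactions the site records, the upload stays a single small record per reporting interval.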

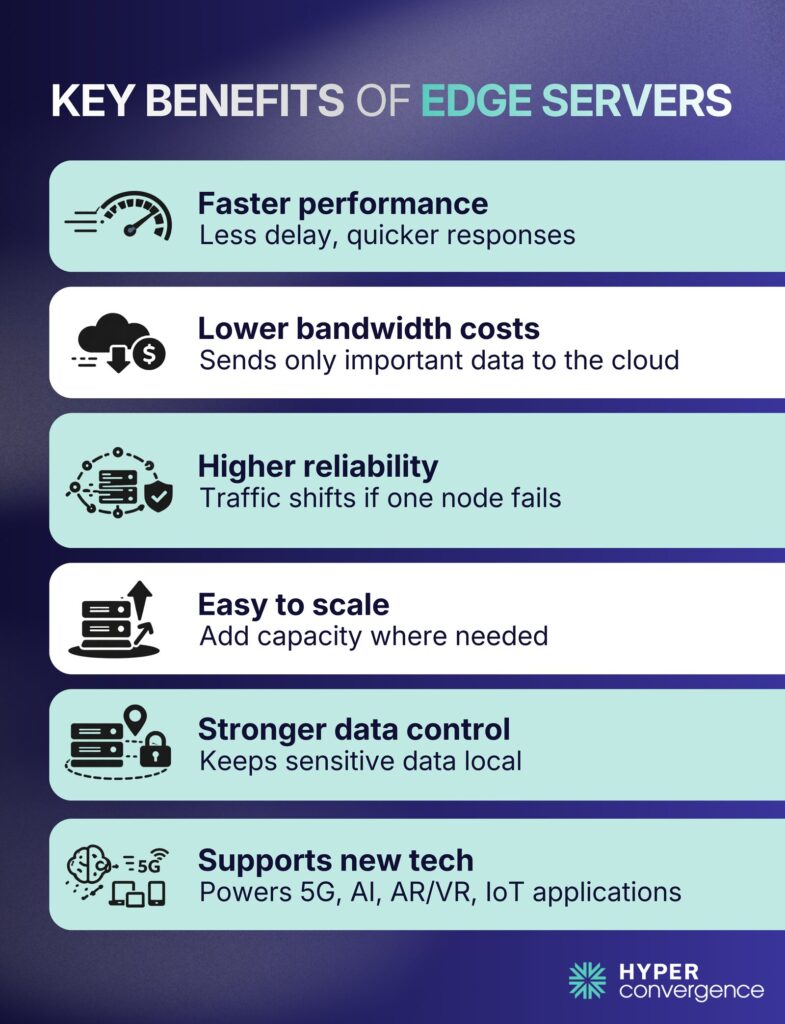

Why Edge Servers Matter: The Key Benefits

More than just a technical buzzword, edge servers solve real business and performance problems, including the following:

Reduced Latency & Faster Performance

By shortening the physical and network distance data must travel, edge servers contribute to minimizing latency and delivering faster response times. In applications like multiplayer gaming, autonomous vehicles, or augmented reality, delays of even a few milliseconds can degrade user experience or cause safety issues.

Market research suggests that edge infrastructure can reduce the distance data travels by 60–85% and cut bandwidth loads by 35–50%. These improvements translate into smoother streaming, quicker transactions, and more responsive applications.

Lower Bandwidth Costs

Instead of sending massive volumes of raw data to the cloud, edge servers filter and compress information, which in turn conserves bandwidth.

For example:

- A smart factory camera may generate terabytes of footage daily.

- The edge server analyzes video locally.

- Only alerts or relevant segments are sent to the cloud.

This lowers bandwidth usage and cloud storage costs.
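The filtering step in this example can be sketched as follows. It assumes each locally analyzed video segment already carries an anomaly score between 0 and 1; the `score` field, the 0.9 threshold, and the sample records are hypothetical.

```python
from typing import Dict, Iterable, List


def filter_at_edge(segments: Iterable[Dict], threshold: float) -> List[Dict]:
    """Keep only segments whose anomaly score exceeds the alert threshold."""
    return [s for s in segments if s["score"] > threshold]


# Hypothetical results of local video analysis on the edge server.
segments = [
    {"camera": "cam-1", "score": 0.10},
    {"camera": "cam-1", "score": 0.95},
    {"camera": "cam-2", "score": 0.20},
    {"camera": "cam-2", "score": 0.15},
]

alerts = filter_at_edge(segments, threshold=0.9)
print(f"forwarded {len(alerts)} of {len(segments)} segments")
```

Only the one segment above the threshold is forwarded; the other three never leave the site.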

Increased Reliability & Availability

Edge deployments distribute workloads across multiple nodes. If one node fails, traffic can be rerouted to nearby nodes without significant performance degradation. This improves overall reliability and helps maintain availability during outages or maintenance windows. In a CDN context, caching at the edge also absorbs traffic that would otherwise hit the origin, which helps shield origin servers from DDoS attacks and reduces the risk of congestion.

Scalability & Flexibility

Edge servers allow organizations to scale computing resources incrementally and geographically. They can be deployed in response to localized demand and upgraded or expanded as workloads grow.

Hardware‑as‑a‑Service (HaaS) models are emerging, enabling businesses to pay for edge hardware via subscription instead of a large upfront capital expenditure. This trend mirrors cloud economics and lowers barriers to adoption.

Enhanced Security and Compliance

Processing data locally reduces exposure to broader outside networks and allows sensitive information to be stored within specific geographic boundaries. This supports compliance with data sovereignty regulations, such as the European Union’s General Data Protection Regulation (GDPR).

Edge servers can also host web application firewalls and other security functions close to where data is generated, so that cyberattacks can be detected and mitigated at the edge.

Support for Emerging Technologies

Edge servers enable real-time data processing, integrate with AI and IoT, and support industrial equipment and other critical systems in industrial settings and harsh environments. They complement technologies like 5G networks, artificial intelligence (AI), virtual/augmented reality, and Industry 4.0. Telecom providers use multi‑access edge computing (MEC) servers at base stations to support 5G applications with ultra‑low latency.

AI models trained in the cloud can run on edge hardware to provide real‑time inference for predictive maintenance or video analytics. As these trends converge, edge servers become critical for delivering immersive and intelligent experiences.

Edge Security and Reliability Best Practices

Edge introduces more sites and more systems. That raises operational demands. As edge servers are distributed across multiple locations, proper design is critical.

Security Practices That Fit Most Deployments

- Identity and access controls for admin access and automation keys

- Encryption for data in transit and data at rest

- Patch management and version control for edge software and OS updates

- Monitoring and logging that covers edge nodes and central services

- Segmentation so that an edge compromise does not expose core systems

Reliability Practices

- Multiple edge nodes per region for redundancy

- Health checks and automated routing away from degraded nodes

- Failover plans for connectivity loss at the edge site

- Local buffering for sensor streams and store-and-forward patterns where needed

The best edge setup treats edge as production infrastructure, not as an experiment.
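The local buffering and store-and-forward practice listed above can be sketched with a bounded queue that holds readings while the uplink is down and drains them once it returns. This is a minimal in-memory illustration; `StoreAndForward`, the `send` callback, and the buffer size are hypothetical, and a production system would usually persist the buffer to disk.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Buffer items locally and forward them in order when the link allows."""

    def __init__(self, send: Callable[[dict], bool], max_items: int = 1000):
        self._send = send  # returns True if the item was delivered
        # Bounded buffer: when full, appending drops the oldest item.
        self._buffer: Deque[dict] = deque(maxlen=max_items)

    def submit(self, item: dict) -> None:
        self._buffer.append(item)
        self.flush()

    def flush(self) -> int:
        """Try to deliver buffered items in order; stop at the first failure."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._buffer)


# Simulated uplink (hypothetical): delivery fails while the link is down.
link = {"up": False}
delivered = []


def send(item: dict) -> bool:
    if link["up"]:
        delivered.append(item)
        return True
    return False


buf = StoreAndForward(send, max_items=3)
buf.submit({"t": 1})  # link down: buffered
buf.submit({"t": 2})  # link down: buffered
link["up"] = True
buf.submit({"t": 3})  # link restored: all three are forwarded in order
print(len(delivered), buf.pending())  # 3 0
```

Dropping the oldest item when the buffer is full is one possible policy; workloads that cannot lose data would need a larger or disk-backed buffer instead.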

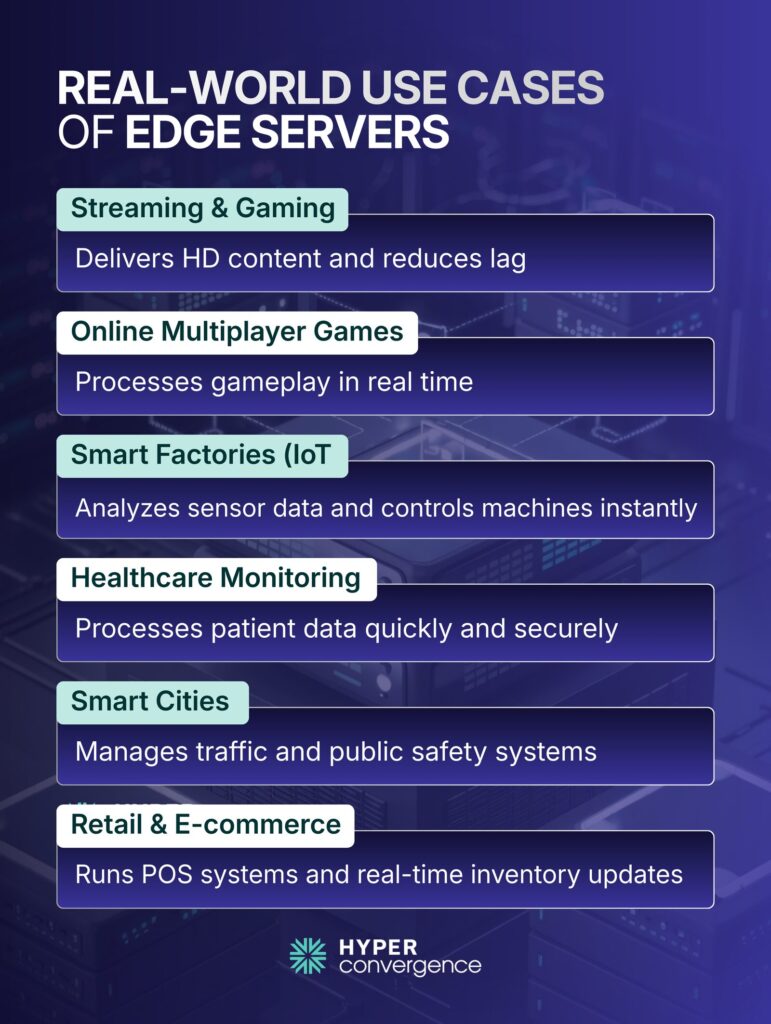

Real‑World Use Cases & Industry Adoption

Edge servers are used in many industries because they address real performance challenges. Key areas of adoption include:

- Streaming and content delivery: Video streaming platforms and live gaming services cache popular content on edge servers to minimize buffering and deliver high‑definition media to global audiences.

- Online gaming: Multiplayer games rely on edge servers to process player interactions and physics calculations in real time, reducing lag and ensuring fair gameplay.

- Industrial IoT and smart manufacturing: Sensors in factories and warehouses generate massive amounts of data. Edge servers perform local analytics and control tasks, triggering maintenance actions or stopping machinery if anomalies are detected.

- Healthcare monitoring: Edge servers process patient data from wearables or medical devices locally, enabling rapid interventions and maintaining compliance with privacy regulations.

- Smart cities and traffic systems: Video feeds from cameras and sensors are analyzed at the edge to optimize traffic lights, detect incidents, and support public safety. In many metropolitan zones, telecom providers deploy 8–14 edge nodes per metro area to deliver sub‑10 ms latency.

- Retail and e‑commerce: Stores use edge servers to run point‑of‑sale systems, manage inventory analytics, and personalize customer experiences without sending every transaction to the cloud.

Adoption Statistics

- Over 60% of enterprises have integrated edge computing into their core IT strategies.

- In North America, around 62% of organizations are using edge technologies for IoT and real-time analytics.

- About 64% of telecom operators are deploying edge infrastructure alongside 5G networks.

- Multiple industries, including manufacturing, healthcare, and smart cities, account for the majority of edge computing deployments.

These figures underline that edge computing has moved from niche experiments to mainstream deployments.

Types of Edge Server Deployments

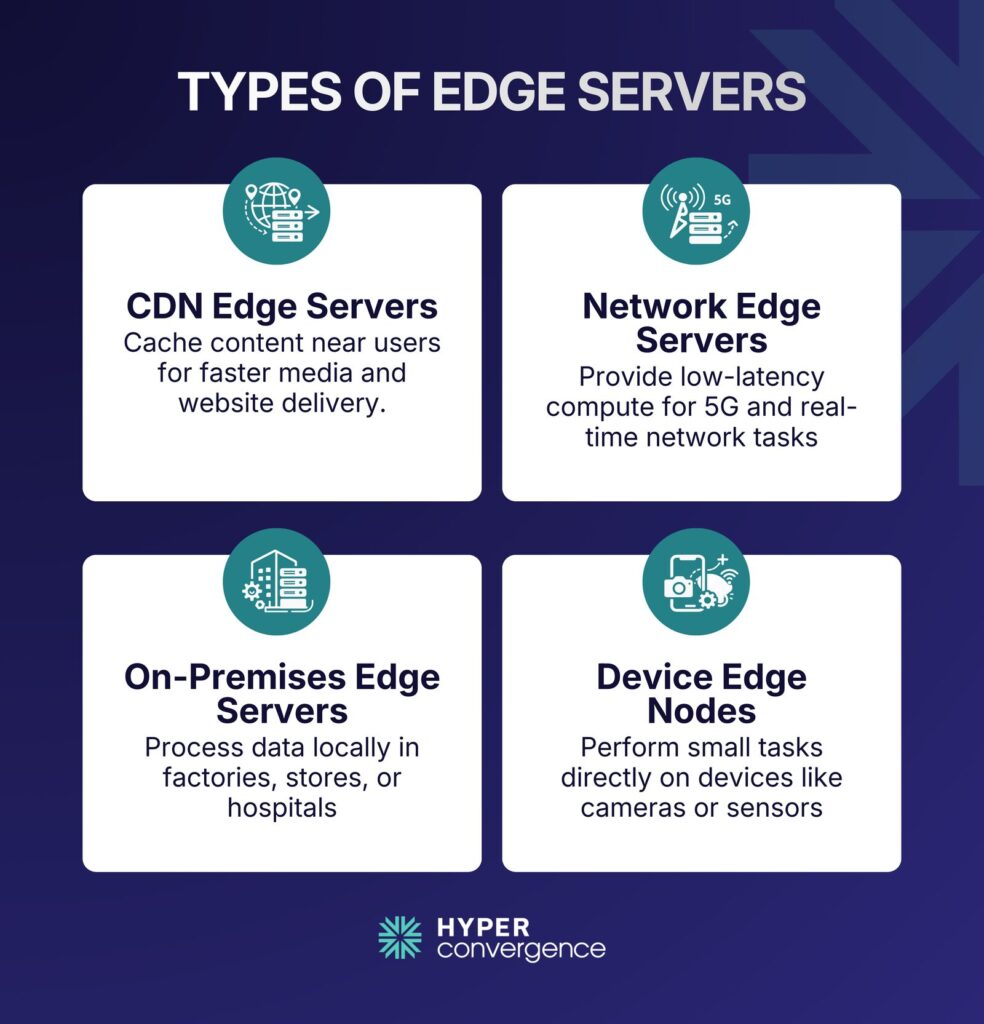

Edge infrastructure spans a spectrum of deployment models, each tailored to specific workloads and environments. STL Partners categorizes edge servers into four primary types:

1. CDN Edge Servers

These nodes reside at regional PoPs and cache static content for websites, streaming platforms, and software distribution. They are optimized for high throughput and low latency to support media delivery and handle traffic spikes.

Typical traits:

- Cache static assets such as images, scripts, and video segments

- Reduce load on origin servers

- Improve responsiveness for geographically distributed users

Common use cases:

- Media streaming

- News sites

- E-commerce catalogs

- Software downloads

2. Network Edge Servers

Deployed in telecom central offices or local mini data centers, network edge servers provide compute resources for 5G networks, real‑time analytics, and network function virtualization. They often operate in ruggedized enclosures and support low‑latency network slices.

Typical traits:

- Support low-latency services near mobile or broadband users

- Often align with multi-access edge computing concepts in 5G-related plans

- Help support localized compute for carrier networks

Common use cases:

- Mobile experiences requiring low latency

- Local breakout for traffic handling

- Network function virtualization workloads

3. On‑Premises Edge Servers

Installed within factories, retail stores, hospitals, or remote facilities, these servers run enterprise applications and control systems locally. They may connect to a central cloud for updates or analytics, but provide critical decision‑making at the site.

Typical traits:

- Handle local processing even if the wide-area link is unstable

- Keep sensitive data local where needed

- Integrate with operational technology and enterprise systems

Common use cases:

- Industrial monitoring and control

- Retail store systems

- Hospital equipment telemetry

- Warehouse automation

4. Device Edge Nodes

In the smallest form factor, edge computing can reside directly on an endpoint device, such as a smart camera or sensor. These nodes perform simple processing tasks like filtering data, running inference models, or communicating aggregated results upstream.

Typical traits:

- Smaller footprints

- Tight power and compute limits

- Optimized for specific tasks such as filtering, inference, or control loops

Common use cases:

- Smart cameras

- Industrial sensors

- Robotics components

- Vehicles and fleets

Choosing the Right Edge Server Hardware

Not all edge servers are designed for the same workloads. Hardware choices depend on what the system needs to process.

Common options include:

- Standard rack or compact servers for general applications

- GPU-powered systems for AI and video analytics

- FPGA or ASIC-based hardware for ultra-low-latency telecom workloads

- Ruggedized servers for factories or outdoor deployments

Some vendors now offer subscription-based hardware models, allowing organizations to deploy edge infrastructure without high upfront costs.

When planning edge deployments, consider latency requirements, power limits, environmental conditions, and remote management capabilities.

Hardware decisions directly impact performance and long-term operating costs.

Edge Servers and Emerging Technologies

Edge servers are tightly linked with several modern trends, including the following:

- 5G and Service Provider Edge

As mobile networks add capacity and reduce latency, applications that demand responsive computing often need nearby processing to match network speed. Edge servers in provider environments support this trend through localized compute and routing.

- AI at the Edge

AI inference workloads often run at the edge when:

- The response must be fast

- The data is too large to move continuously

- Privacy rules limit central transfer

- Connectivity is inconsistent

Grand View Research reports that the global edge computing market was estimated at USD 23.65 billion in 2024 and is projected to reach USD 327.79 billion by 2033, a 33.0% CAGR from 2025 to 2033.

It also reports hardware as a major part of this market, with the hardware segment accounting for over 42% of revenue share in 2024.

These market signals align with rising demand for edge hardware that can run inference close to users and devices.

- Industry 4.0 and Operational Automation

Automation systems in manufacturing and logistics often require real-time decisions. Edge servers help process machine telemetry and operational signals close to the line.

Edge Server Market Insights & Growth Projections

The edge server market is expanding rapidly as organizations seek low‑latency computing and localized data processing. However, estimates vary across research firms, reflecting different methodologies and scope. Here are key trends backed by market research:

- The global edge server market was valued at approximately USD 8.64 billion in 2025, projected to grow to USD 136.25 billion by 2035 (CAGR ~31.76%).

- Over 55% of industrial automation and telecom systems now rely on edge deployment for faster decision‑making and efficiency.

- Edge servers are increasingly used in retail, healthcare, and smart infrastructure, each accounting for significant adoption rates within its sector.

These numbers show that edge servers aren’t just a niche trend; they are mainstream infrastructure components for performance‑driven applications.
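The growth rate quoted above can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short sketch below applies it to the 2025 and 2035 market values cited in this section, treating the span as 10 years; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures cited above: USD 8.64 billion (2025) to USD 136.25 billion (2035).
rate = cagr(8.64, 136.25, 10)
print(f"{rate:.1%}")  # close to the ~31.76% quoted above
```

The same function can be used to sanity-check other forecasts in this article, as long as the start year, end year, and values come from the same source.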

IoT Device Growth as a Key Driver

As endpoints grow, demand for localized processing grows too.

IoT Analytics reports:

- 18.5 billion connected IoT devices in 2024

- 21.1 billion expected connected IoT devices by the end of 2025

- 39 billion projected connected IoT devices in 2030

- 13.2% CAGR from 2025 through 2030 for connected IoT devices

That growth increases the need for IoT edge computing patterns that filter, process, and act locally.

Challenges and Considerations

While edge servers bring clear benefits, organizations should be aware of technical and operational considerations. Here are the key challenges in the deployment of edge servers:

Integration Complexity

Edge systems must integrate with cloud tools, security tools, and existing applications. Multi-vendor environments can add friction.

Distributed Security Management

More locations can mean more attack surface. Security practices must cover:

- Remote access

- Patch cycles

- Secrets management

- Monitoring and incident response

Operations and Lifecycle Management

Edge sites can be harder to service than data centers. Teams need plans for:

- Remote monitoring

- Spare parts and replacements

- Standard images and configurations

- Clear ownership between IT and operations teams

When Edge is a Weak Fit

Edge is not always the right choice. It may be a weak fit when:

- The workload is not latency sensitive

- The workload needs very large centralized datasets in real time

- The edge site cannot support power, cooling, or physical security needs

- The operational cost of many sites outweighs performance gains

In these cases, a hybrid plan often resolves the tradeoffs by keeping such workloads in the cloud and moving only latency-sensitive work to the edge.

Comparison: When to Use Edge Server vs Cloud Server

Choosing between edge and cloud servers depends on workload characteristics. The table below summarizes typical scenarios:

| Scenario | Edge Server | Cloud Server |
| --- | --- | --- |
| Real‑time processing & low latency | Ideal: Edge servers provide immediate responses for use cases like gaming, AR/VR, industrial control, and autonomous vehicles. | Limited: Cloud latency may be too high for time‑sensitive applications. |
| Global user reach | Possible with CDNs: Edge servers in combination with CDNs can deliver content locally to global audiences. | Suitable: Cloud servers can reach global users through distributed data centers, but may rely on CDNs for latency. |
| Heavy compute & long-running tasks | Some limitations: Edge nodes often have limited resources; heavy computation may require a connection to the cloud. | Strong: Cloud servers provide virtually unlimited compute and storage for tasks like AI training, batch analytics, and large‑scale data processing. |
| Cost efficiency | Efficient for local caching: Reduces bandwidth costs and avoids sending unnecessary data to the cloud. | Potentially higher: Data transfer and storage costs can be higher when all data is processed centrally. |
| Regulatory compliance & data sovereignty | Favorable: Data can remain within geographic boundaries and comply with local regulations. | Potential issues: Data may cross borders depending on server locations and cloud provider policies. |

In practice, organizations often adopt a hybrid architecture, combining edge and cloud resources. For example, a factory might run real‑time control loops on an on‑premises edge server while sending aggregated metrics to a cloud platform for long‑term analytics and machine learning model training. Hybrid models allow enterprises to leverage the strengths of both paradigms.

Conclusion

Edge servers represent a fundamental shift in how modern infrastructure is designed. By placing compute and storage closer to users and devices, an edge server reduces latency, improves performance, and supports real-time processing in ways centralized cloud environments alone cannot.

Industry data reinforces this shift. IDC forecasts global spending on edge computing services at nearly $261 billion in 2025 and $380 billion by 2028, at a 13.8% CAGR. At the same time, IoT Analytics projects 39 billion connected IoT devices by 2030, driving demand for localized processing and edge computing architectures.

In reality, edge and cloud servers are complementary rather than competing technologies. They work best together. Edge handles fast, local workloads. Cloud handles large-scale storage and heavy computing.

Understanding what an edge server is and how it differs from a cloud server or CDN helps organizations design low-latency, distributed systems. Edge servers power real-time applications, IoT edge computing, and AI workloads near users, while cloud platforms handle large-scale storage and heavy processing. Together, they form hybrid architectures that balance speed, scalability, and resilience.

FAQs

What is an edge server?

An edge server is a computing device placed near end users or data sources to handle data processing locally. Unlike centralized traditional servers, it reduces latency, improves real-time decision making, and speeds up content delivery.

What is an edge server in cloud computing?

In cloud computing, an edge server extends cloud resources closer to users, handling local tasks while staying connected to central clouds. This arrangement enables real-time data processing for critical applications without relying solely on distant data centers.

How do edge servers differ from traditional servers?

Edge servers operate near users, which reduces latency and enables faster decision making. Traditional servers rely on centralized data centers, which can cause delays for real-time decision making or critical applications.

What is the difference between a CDN and an edge server?

A CDN caches content globally to improve delivery speed, while an edge server not only caches but also performs local data processing and supports critical applications. Edge servers support real-time decision making, whereas CDNs mainly handle static content.

What is the difference between an origin server and an edge server?

An origin server hosts the main data and content, while edge servers handle requests at the point nearest to users. This reduces load on the origin and conserves bandwidth for faster, localized access.

How does edge computing differ from cloud computing?

Cloud computing centralizes storage and processing, whereas edge computing distributes tasks to physical locations near users and end devices, enabling real-time data processing and supporting critical applications in industrial settings.

Can edge servers work without a cloud connection?

Yes. Edge servers can run critical applications locally and keep processing data without relying on outside networks when cloud access is unavailable. This is vital in harsh environments or industrial settings that need uninterrupted operation.

How do edge servers support IoT?

Edge servers connect with end devices and other devices in IoT networks, performing real-time data processing close to sensors. This supports faster responses, predictive maintenance, and industrial equipment monitoring.

How do edge servers support AI applications?

AI applications can run on edge servers near devices to analyze data locally. This reduces latency for real-time decision making in areas like robotics, autonomous vehicles, and industrial equipment intelligence.

Which industries benefit most from edge servers?

Manufacturing and other industrial operations, smart cities, healthcare, and retail benefit most. Edge servers improve speed, reliability, and real-time data processing for critical applications.

What role do edge servers play in modern IT infrastructure?

Edge servers play a key role by reducing latency, supporting real-time data processing, and enabling critical applications. Their distributed nature allows faster service delivery and improves resilience for modern IT systems.

Does edge computing reduce latency compared to cloud computing?

Yes. By processing data near physical locations and end devices, edge computing reduces round-trip delays compared to centralized cloud computing, supporting real-time decision making and critical applications.

Is edge computing related to Microsoft Edge?

No. Edge computing refers to the distributed deployment of servers for local data processing and low-latency services. Microsoft Edge is a web browser. The terms are unrelated except for shared naming.

Tamzid is a distinguished SEO writer with over six years of experience specializing in IT, technology, data centers, and cybersecurity. His expertise extends to hyperconverged infrastructure, where he delivers well-researched, high-ranking content that bridges technical accuracy with reader clarity. With a deep understanding of both search optimization strategies and advanced IT concepts, Tamzid produces authoritative material that engages industry professionals and decision-makers alike.